Over the past few years, we have seen a proliferation of vulnerabilities affecting many organisations.

Vulnerabilities in application security, cloud security and traditional infrastructure security are challenging to find in an environment lacking a risk-scored asset register.

So what is the challenge in addressing vulnerabilities as they come?

Many factors:

- Fixing application and cloud security vulnerabilities in the first instance is challenging and requires a plan.

- Usually, when first assessed, a system, repository, or library has tons of vulnerabilities, and the task seems overwhelming.

- When teams decide what to fix, scanners do not return contextual information (where the problem is, how easy it is to exploit, how frequently it gets exploited).

- Operational requirements often demand a very specific time window to maintain operational efficiency and SLA uptime.

- Fixing bugs and upgrading applications and cloud security systems is always fighting for resources against creating new value and products.

- Usually, vulnerabilities get detected at the end of a production/development lifecycle (when the system needs to be pushed to production). Security assessments usually cause a lot of pain as changes are very complex and painful at this stage.

So what is the solution to all this? A well-crafted plan, with clear objectives, to fix the most urgent vulnerabilities first.

But how do we define which vulnerabilities are more urgent? Cybersecurity vulnerabilities in modern stacks are complex to evaluate because they can affect different parts of the technology stack:

- Vulnerabilities in code for application security

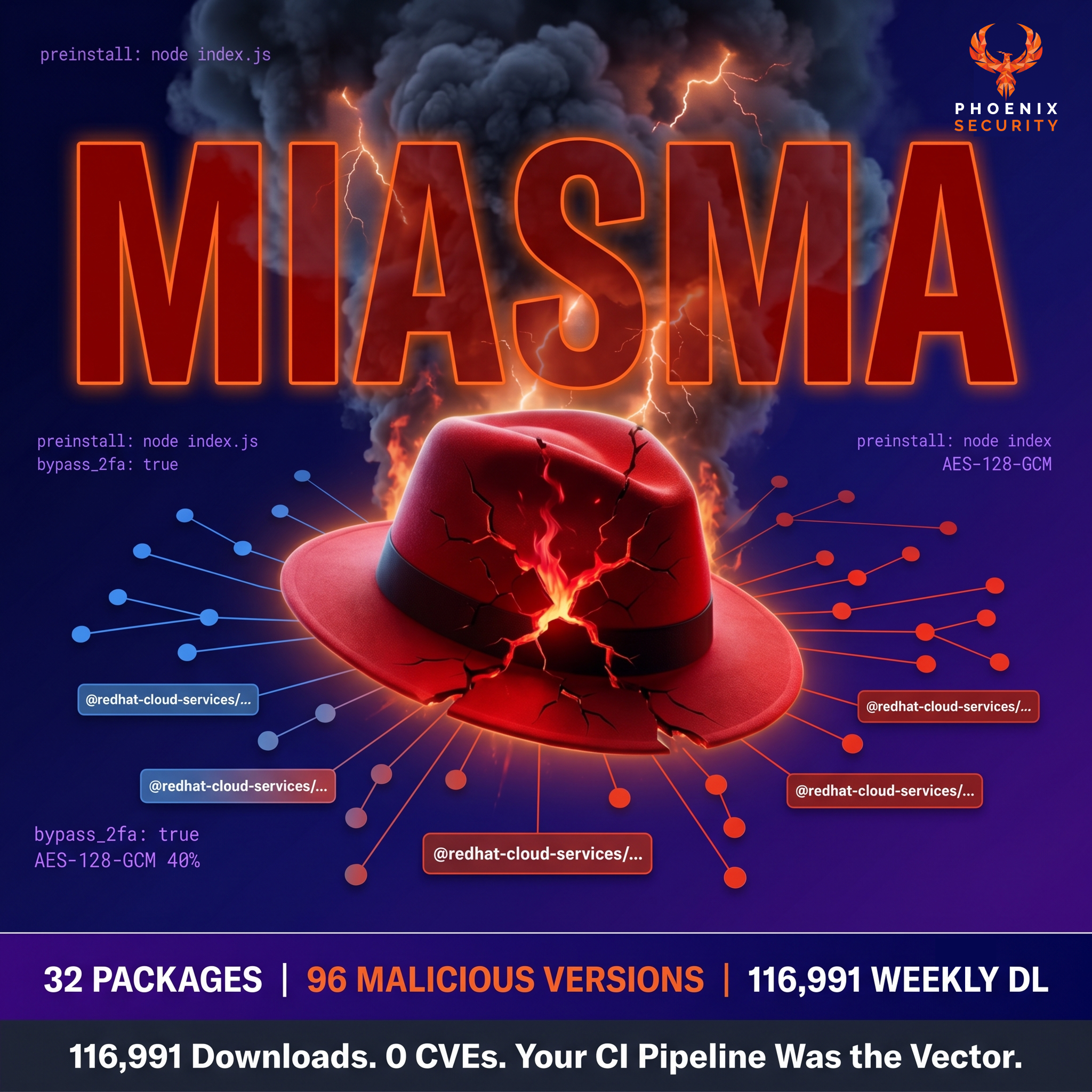

- Vulnerabilities in Open source libraries for application security

- Vulnerabilities in Runtime environments (like Java) or libraries for a runtime environment (like Log4j) for application security

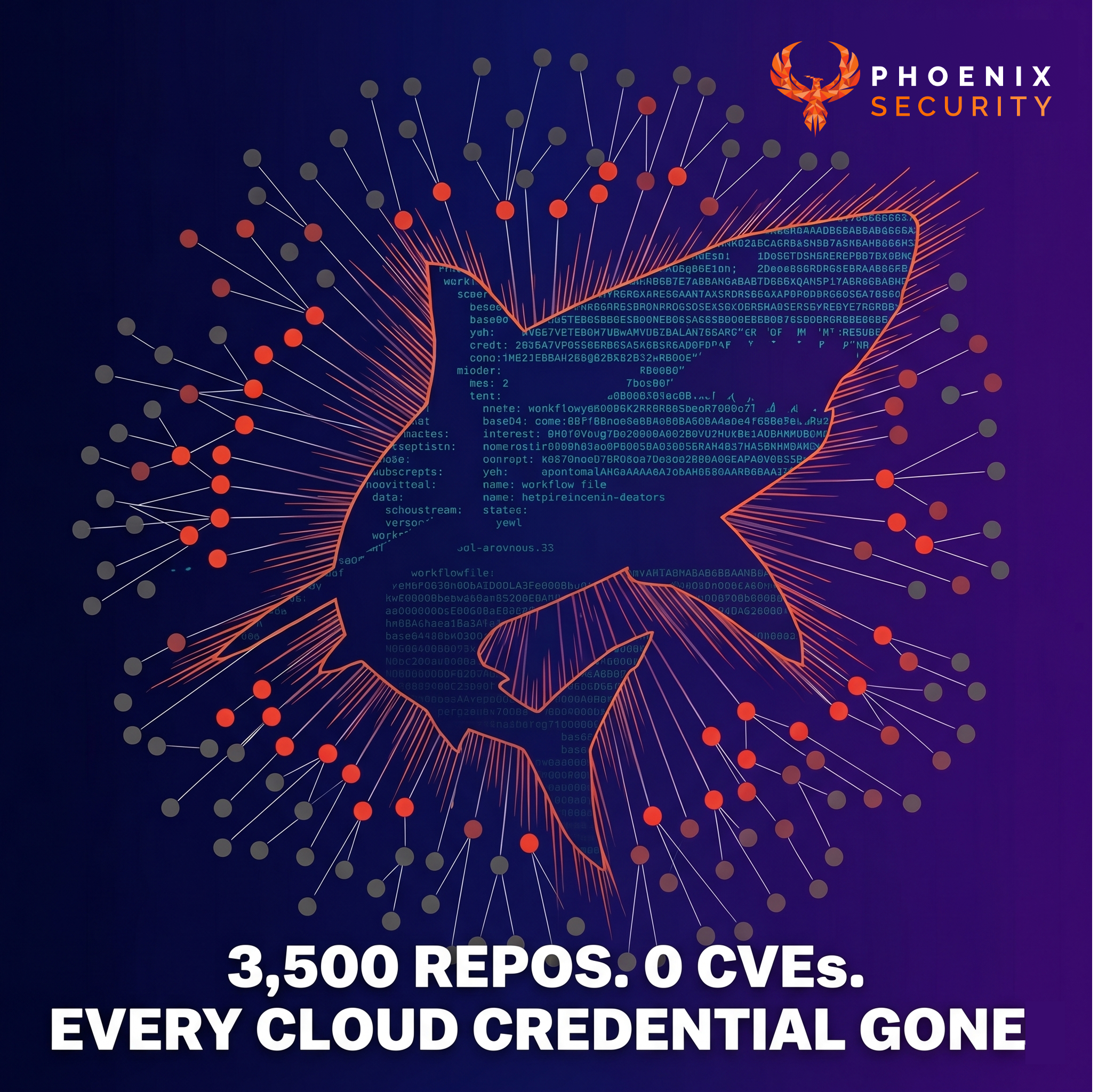

- Vulnerabilities in Operating systems for infrastructure and cloud security

- Vulnerabilities in Containers for infrastructure and cloud security

- Vulnerabilities in Supporting Systems (like the Kubernetes control plane) for infrastructure and cloud security

- Vulnerabilities or Misconfiguration in cloud systems for infrastructure and cloud security

So how do we select among all of them? Usually, the answer is triaging the vulnerabilities. Normally, those triaging the vulnerabilities are security champions collaborating with the development or security teams.

Security teams usually struggle with time and scale. They have to choose among tons of vulnerabilities without much time to analyse threats and determine which vulnerabilities pose the most risk.

The answer to this problem is rarely simple; it often requires risk assessment and collaboration with the business to allocate enough time and resources to plan for resolution and ongoing assessment. This methodology has proven to be one of the most efficient ways to tackle vulnerabilities at scale (https://www.gartner.com/smarterwithgartner/how-to-set-practical-time-frames-to-remedy-security-vulnerabilities).

Risk and severity are very different concepts, but the two terms are often used interchangeably.

- Risk = probability of an event occurring × impact of that event occurring

- Severity is usually linked to a CVSS analysis of the damage a vulnerability could cause
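
As a minimal sketch of this distinction, the snippet below applies the risk formula to two findings that share the same severity. The function name `risk`, the probabilities and the impact figures are illustrative assumptions, not values produced by any scanner.

```python
def risk(probability: float, impact: float) -> float:
    """Risk = probability of an event occurring * impact of that event."""
    if not 0.0 <= probability <= 1.0:
        raise ValueError("probability must be between 0 and 1")
    return probability * impact


# Two hypothetical findings with the same severity rating.
# The probabilities and impact figures are made-up illustration values.
internal_batch_job = risk(probability=0.02, impact=10_000)
public_payment_api = risk(probability=0.60, impact=500_000)

# Same severity, very different risk: the public API ranks first.
print(public_payment_api > internal_batch_job)
```

Severity alone would rank both findings equally; the risk view separates them once probability and impact are taken into account.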

What’s wrong with risk assessment of vulnerabilities as it stands?

- Not Actionable risk – Risk scoring is usually based on CVSS base and/or temporal score. Those scores are not the true reflection of the cloud security risk or application security risk.

- Not Real-Time – Risk scoring is traditionally static, often kept in a spreadsheet and updated from impact and probability analysis, but those values are often arbitrary and not quantifiable. Modern organisations have a dynamic landscape where risk changes constantly, even hour by hour.

- Not Contextual – Base severity needs to be tailored to the particular environment where the vulnerability appears, and that is particularly complex to determine manually.

- Static Threat intelligence – Organisations consider threat intelligence a separate process when triaging and assessing vulnerabilities. Triaging vulnerabilities by looking at threat intelligence is usually delegated to individuals, a manual process prone to miscalculation and risk. Moreover, if not curated for specific assets, threat intelligence alerts can be expensive yet yield very little actionable intelligence.

- Not Quantifiable – Risk that is based on data and calculated in real time is quantifiable. Risk assessed manually by individuals based on opinion is qualitative, not quantitative.

- Not Actionable – Risk scoring and tracking is usually done offline or on a spreadsheet and reviewed quarterly. That can lead to confusion.

Risk is multi-layer and multi-dimensional

To be able to capture all the complexity and nuance that affects risk, we need to consider multiple dimensions. Furthermore, the association and relationships between assets and their vulnerabilities mean that there are multiple layers of aggregation to consider.

For a more extensive discussion and analysis of risk, you can refer to previous articles and white papers:

- How can you quantify cyber risk?

- CVSS does not fit anymore Risk and Context are kings

- Why Prioritize vulnerability? A case for Risk and Contextual-based prioritization

- Whitepaper: Prioritizing Vulnerabilities On Cloud And in Software – CVE and the Land of Broken Dreams

Risk Calculation

Risk is calculated in multiple tiers with different factors influencing the risk score at different levels.

At the individual Vulnerability level, we consider:

- Base Severity: This is the base score value provided by scanners and other sources (e.g. CVSS). It focuses on the level of danger posed by a vulnerability, but in isolation from other contextual “modifiers”, which is what we take into account with the following factors.

- Locality: This is the externality factor of the asset affected by the vulnerability.

- Impact: This factor reflects the criticality of the Application or Environment affected by this vulnerability.

- Probability of exploit: This considers Fixability, EPSS and CTI information.

  - Fixability: Whether the vulnerability has a known remediation.

  - EPSS: We use a factor derived from the vulnerability's EPSS score (if available) to tune the risk up or down depending on the exploitability indicator.

  - CTI: This factor captures other CTI sources that might inform the position of this vulnerability with regard to exploitability.
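
The factors above could be combined in many ways; the article does not publish a formula. The sketch below is one hypothetical multiplicative model, written only to show how contextual modifiers can move a score away from its base severity. Every weight, the 0-10 output scale, and the names `Vulnerability`, `exploit_factor` and `vulnerability_risk` are assumptions.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Vulnerability:
    base_severity: float          # 0-10, e.g. CVSS base score from a scanner
    locality: float               # 0.0 = fully internal asset, 1.0 = fully external
    impact: float                 # 0.0-1.0 criticality of the application/environment
    has_known_fix: bool           # Fixability
    epss: Optional[float] = None  # EPSS probability (0.0-1.0), if available
    cti_exploited: bool = False   # other CTI sources report exploitation


def exploit_factor(v: Vulnerability) -> float:
    """Probability-of-exploit modifier. All weights are placeholder assumptions."""
    factor = 1.0
    if v.epss is not None:
        factor *= 0.5 + v.epss    # below 1 tunes risk down, above 1 tunes it up
    if v.cti_exploited:
        factor *= 1.5             # assumed boost for observed exploitation
    if v.has_known_fix:
        factor *= 1.1             # assumed nudge; the direction is a modelling choice
    return factor


def vulnerability_risk(v: Vulnerability) -> float:
    """Contextual risk, clamped to the same 0-10 scale as the base severity."""
    context = (0.5 + 0.5 * v.locality) * (0.5 + 0.5 * v.impact)
    return min(10.0, v.base_severity * context * exploit_factor(v))


# Same base severity, very different context (illustrative values).
exposed = Vulnerability(7.5, locality=1.0, impact=1.0, has_known_fix=True,
                        epss=0.9, cti_exploited=True)
buried = Vulnerability(7.5, locality=0.0, impact=0.2, has_known_fix=False,
                       epss=0.01)
print(vulnerability_risk(exposed), vulnerability_risk(buried))
```

With these assumed weights, the externally exposed, actively exploited finding saturates the scale while the internal, low-criticality one drops well below its base severity.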

At the Asset level, a different set of factors is taken into account:

- Locality: This factor reflects an asset’s “logical position” and measures whether the asset is fully external, entirely internal or somewhere in between. Here we leverage the aggregation of assets into groups and derive each asset’s locality based on its group.

- Impact: Another important contextual element is an asset’s level of impact (or criticality), which is directly related to the criticality of the service or application to which the asset belongs.

- Density: When aggregating vulnerability risk into higher groups – like an asset – one of the trickiest parts is to avoid diluting the “combined risk” through averages. Our solution is to introduce a density factor that captures the relative number of vulnerabilities affecting the asset.
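
To illustrate why a density factor helps, the sketch below compares a plain average with an average scaled by density. The linear form, the `density_weight` value and the function name `asset_risk` are assumptions made for illustration; the source only states that density captures the relative number of vulnerabilities on an asset.

```python
from statistics import mean


def asset_risk(vuln_risks: list[float], max_vulns_per_asset: int,
               density_weight: float = 0.25, max_risk: float = 10.0) -> float:
    """Average vulnerability risk, scaled up by a density factor.

    density is this asset's vulnerability count relative to the most
    crowded asset. The linear scaling is an illustrative assumption.
    """
    if not vuln_risks:
        return 0.0
    density = len(vuln_risks) / max(max_vulns_per_asset, len(vuln_risks))
    return min(max_risk, mean(vuln_risks) * (1.0 + density_weight * density))


sparse = [6.0]        # one vulnerability
dense = [6.0] * 20    # twenty vulnerabilities of the same individual risk

# A plain average cannot tell the two assets apart...
print(mean(sparse) == mean(dense))
# ...while the density factor ranks the crowded asset higher.
print(asset_risk(dense, 20) > asset_risk(sparse, 20))
```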

Components and Services aggregate a number of assets into higher-level units with their own attributes.

- Locality: At this level we look into the aggregated impact of individual assets on the component’s locality. Furthermore, if an Application is deployed, we consider the locality of the environment/service where it is deployed, since that is a better reflection of the externality of the assets.

- Complexity: Sometimes averages are a dangerous tool for summarising quantifiable information. For two components with the same average asset risk, the one with more assets should intuitively carry a higher risk than the one with fewer. This is what the Complexity factor tries to capture in the risk calculation: how complex this component is compared to other components.

- Mitigation: Where mitigating controls affect a component, its effective risk is influenced by those controls. This factor considers the presence of any mitigating controls and adjusts the risk accordingly.
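
A hypothetical sketch of the Complexity and Mitigation factors at component level follows. The way complexity is normalised against the largest component, the `complexity_weight`, and the idea of expressing mitigation as a fractional reduction are all assumptions; the source describes the intent of these factors, not their formulas.

```python
from statistics import mean


def component_risk(asset_risks: list[float], largest_component_size: int,
                   mitigation_reduction: float = 0.0,
                   complexity_weight: float = 0.2) -> float:
    """Hypothetical component risk on a 0-10 scale.

    complexity: asset count relative to the largest component.
    mitigation_reduction: assumed fraction of risk removed by mitigating
    controls (0.0 = none, 0.5 = half).
    """
    if not asset_risks:
        return 0.0
    complexity = len(asset_risks) / max(largest_component_size, len(asset_risks))
    raw = mean(asset_risks) * (1.0 + complexity_weight * complexity)
    return min(10.0, raw * (1.0 - mitigation_reduction))


small = [5.0] * 2     # two assets
large = [5.0] * 10    # ten assets with the same average risk

print(component_risk(small, 10))                            # lower: fewer assets
print(component_risk(large, 10))                            # higher: more assets
print(component_risk(large, 10, mitigation_reduction=0.5))  # mitigated
```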

Application and Environment risk is a straightforward average of the risk of their constituent components. By this stage we have already applied an extensive array of factors to capture a wide range of circumstances affecting risk, so at the App/Env level the average of the components’ risk represents the application’s or environment’s own risk level.

However, when we get to the Organisation level we need to consider some of the advanced relationships we have in the platform. In particular, when an application is deployed on an environment, we want to consider both elements’ “combined” risk. This is where the Risk Contribution settings for the organisation reflect the relative importance, or weight, of Apps and Envs risk on the final aggregation. Deployed application risk is combined with that of their environment using these “contribution” weights.

Furthermore, any non-deployed applications, and any environments with no application deployed, will contribute to the Organisation’s risk in proportion to the risk contribution weights.
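
One way to read the aggregation described above is as a weighted average, sketched below. The weighted-mean form, the default weights of 0.6 and 0.4, and the function names are assumptions for illustration; the platform's actual Risk Contribution calculation is not given in this article.

```python
from statistics import mean


def app_or_env_risk(component_risks: list[float]) -> float:
    """App/Env risk: a straightforward average of component risk."""
    return mean(component_risks) if component_risks else 0.0


def organisation_risk(deployed: list[tuple[float, float]],
                      undeployed_apps: list[float],
                      empty_envs: list[float],
                      app_weight: float = 0.6,
                      env_weight: float = 0.4) -> float:
    """Weighted aggregate of App and Env risk (assumed weighted mean).

    deployed: (app_risk, env_risk) pairs for applications deployed on an
    environment. undeployed_apps and empty_envs hold unpaired risks.
    """
    weighted_sum = 0.0
    total_weight = 0.0
    for app_risk, env_risk in deployed:
        weighted_sum += app_weight * app_risk + env_weight * env_risk
        total_weight += app_weight + env_weight
    for app_risk in undeployed_apps:
        weighted_sum += app_weight * app_risk
        total_weight += app_weight
    for env_risk in empty_envs:
        weighted_sum += env_weight * env_risk
        total_weight += env_weight
    return weighted_sum / total_weight if total_weight else 0.0


shop_app = app_or_env_risk([9.0, 7.0])   # average of two components
prod_env = app_or_env_risk([3.0, 5.0])
print(organisation_risk([(shop_app, prod_env)],
                        undeployed_apps=[2.0], empty_envs=[6.0]))
```

Raising `app_weight` relative to `env_weight` makes application risk dominate the organisation score, which is the lever the Risk Contribution settings are described as providing.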

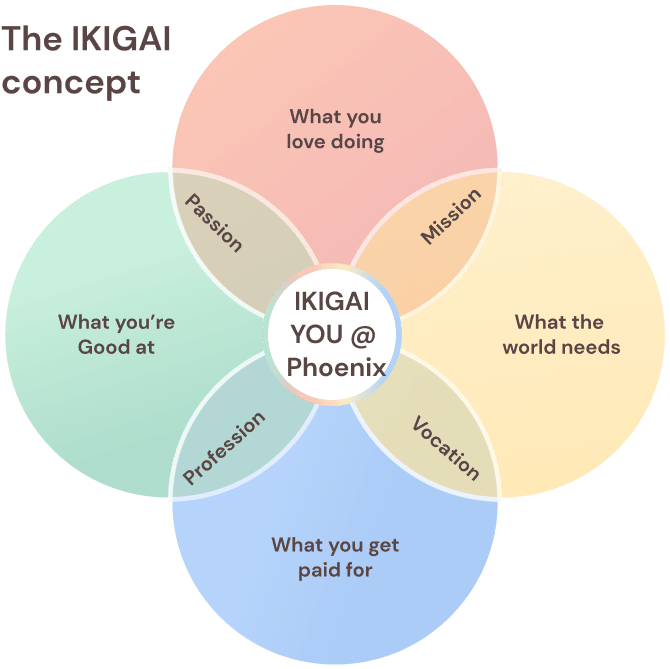

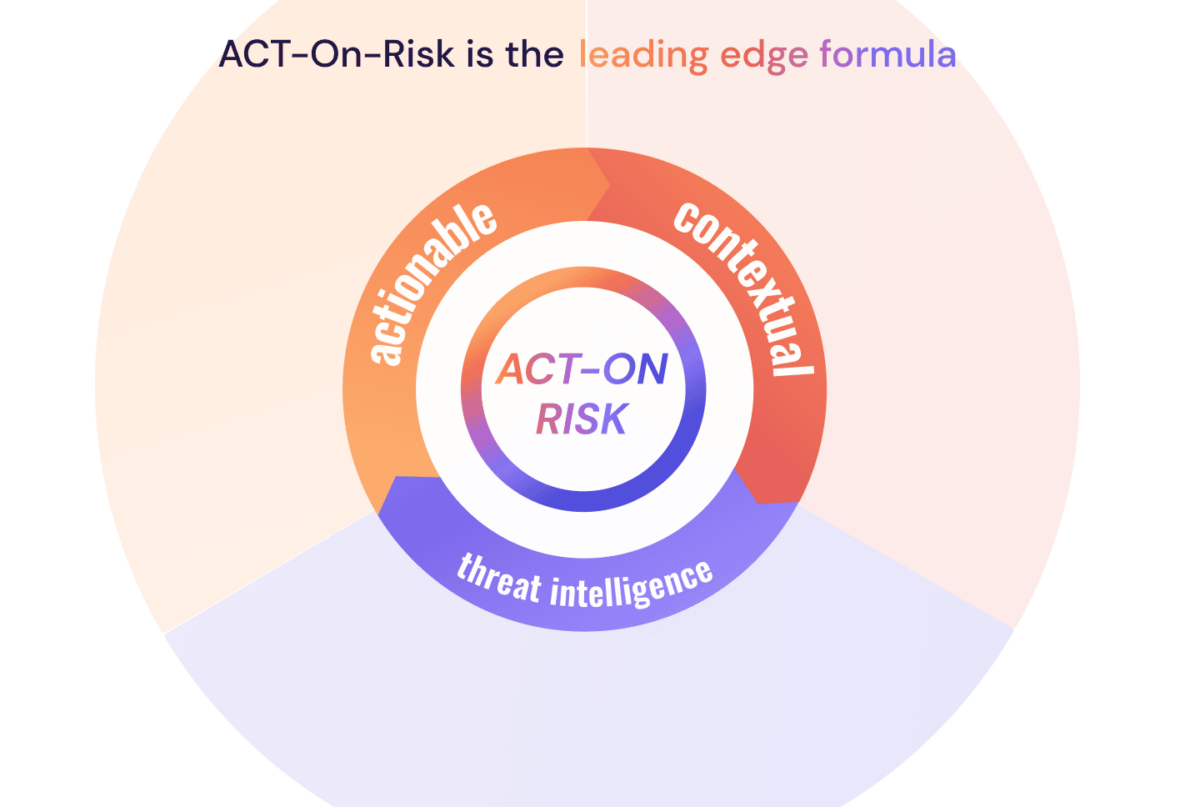

Act on Risk: Contextual, Real-Time, Modern Risk and Posture Calculation

Enriching asset and vulnerability context increases visibility, giving IT security decision-makers the information they need to prioritize security activities while maintaining strong assurances. Without risk context, any approach is a sledgehammer; an arbitrary, ad-hoc security strategy is not a strategy at all, and it increases risk. Data-driven decision-making, on the other hand, can provide quantified, actionable risk scores. These allow a company to take a more surgical approach: calculate contextual risk, prioritize activities, and apply resources strategically based on business impact and data-driven probabilities. This reduces anxiety and the overwhelming feeling of unmanageable issues while still providing a high degree of security assurance.

Although the number of CVEs published each year has been climbing, placing a burden on analysts to determine the severity and recalculate risk to IT infrastructure, enriching a CVE’s context offers an opportunity to increase IT security team efficiency. For example, in 2021, there were 28,695 vulnerabilities disclosed, but only roughly 4,100 were exploitable, meaning that only 10-15% presented an immediate potential risk. Having this degree of insight offers clear leverage, but how can this degree of insight be gained?

Automating The Whole Process

Operational efficiencies can be gained by accurately contextualising cyber risk to prioritize vulnerabilities more effectively. They can also be gained by automating the collection of related data and the calculation of contextual risk scores for a particular system, a sub-group of systems, or the IT infrastructure of an entire enterprise.

Enriching CVE data is prohibitively time-consuming and complex, so delegating this task to an IT security team detracts from actual remediation activities. Instead, when human analysts and IT security administrators are presented with an easily accessible list of security priorities ranked by risk score, they can focus on processes such as remediation, response, developing or adjusting controls and policies, or developing training programs.
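
As a small sketch of what such a prioritised list might look like once risk scores exist, the snippet below sorts findings by contextual risk and keeps the top entries. The `Finding` fields, the identifiers and the scores are hypothetical placeholders, not output from a real tool.

```python
from dataclasses import dataclass


@dataclass
class Finding:
    finding_id: str    # placeholder identifier, not a real CVE
    asset: str
    risk_score: float  # contextual risk, assumed to be computed upstream


def remediation_queue(findings: list[Finding], limit: int = 10) -> list[Finding]:
    """Return the highest-risk findings first, capped at `limit` entries."""
    return sorted(findings, key=lambda f: f.risk_score, reverse=True)[:limit]


findings = [
    Finding("VULN-1", "internal-batch-job", 1.1),
    Finding("VULN-2", "public-payment-api", 9.4),
    Finding("VULN-3", "staging-cluster", 4.7),
]

for f in remediation_queue(findings, limit=2):
    print(f.finding_id, f.asset, f.risk_score)
```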

Conclusion

No matter how risk is calculated, it should be dynamic, automated and based on more threat signals.

Security teams are too stretched to perform manual assessments across everything they need to cover. 54% of security champions and professionals are considering quitting due to the overwhelming complexity and tediousness of tasks that could be automated.

New security professionals struggle to get into triaging as the knowledge barrier to assess vulnerabilities is incredibly high.

The more the assessment process can be automated, the better. This methodology makes the job of security professionals easier: they can act in a consultative way and scale much better.

It also scales the security team, letting security champions and vulnerability management teams help decide how to fix issues instead of spending their time triaging and deciding what to fix.

How Can Phoenix Security Help

Phoenix Security is a SaaS platform that ingests security data from multiple tools covering cloud, applications, containers, infrastructure, and pentests.

The Phoenix Security platform deduplicates, correlates and contextualises this data, and shows the risk profile of applications, their potential financial impact, and where they run.

The Phoenix Security platform enables risk-based assessment, reporting and alerting on risk linked to contextual, quantifiable real-time data.

The business can set risk-based targets to drive resolution, and the platform will deliver a clean, precise set of actions to remediate the vulnerability for each development team updated in real-time with the probability of exploitation.

Phoenix Security enables the security team to scale and deliver the most critical vulnerabilities and misconfigurations to work on directly into the backlogs of development teams.

Request a demo today here